Nvidia Stock Price Forecast - NVDA at $181 Is the Cheapest It Has Ever Been — 73% Revenue Growth

NVDA trades at just 22x forward earnings after Q4 operating margins hit an all-time high of 65%, gross margins return to 75%, and Jensen Huang teases a chip that "will surprise the world"

Nvidia Stock (NASDAQ: NVDA) at $181 — The Lowest Valuation in Company History, a $20 Billion LPU Gamble, and GTC 2026 as the Reset Catalyst

Nvidia (NASDAQ: NVDA) Is Trading at 22x Forward Earnings After 73% Revenue Growth — The Market Has Never Priced This Stock This Cheaply

Nvidia Corporation (NASDAQ: NVDA) trades at $181.16 on Friday, March 13 — down 1.08% on the session and sitting in a range that represents the lowest forward earnings multiple the stock has carried in its entire modern history as an AI infrastructure company. At $181 to $182 per share, NVDA trades at approximately 22 times forward earnings — a multiple that would be unremarkable for a mature industrial company growing at 5% annually but is genuinely extraordinary for a company that just delivered 73% revenue growth in Q4 fiscal 2026, guided for 77% revenue growth in Q1 fiscal 2027 with $78 billion in quarterly revenue guidance, and is operating at all-time high operating margins of 65%. The 52-week range spans $167.02 at the low to $207.04 at the high — the current price at $181 sits in the lower half of that range, approximately 12.6% below the 52-week high, at a moment when the company's fundamental trajectory has never been stronger.
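The range arithmetic above can be reproduced with a short sketch. The function name and the returned keys are illustrative choices, not part of any data provider's API; the three inputs are the close and 52-week extremes quoted in the paragraph.

```python
def range_position(price: float, low: float, high: float) -> dict:
    """Locate a price within its 52-week range."""
    return {
        # Distance from the 52-week high, as a percentage of the high.
        "pct_below_high": (high - price) / high * 100,
        # Distance from the 52-week low, as a percentage of the low.
        "pct_above_low": (price - low) / low * 100,
        # 0.0 means at the low, 1.0 means at the high.
        "position_in_range": (price - low) / (high - low),
    }


stats = range_position(price=181.16, low=167.02, high=207.04)
for name, value in stats.items():
    print(f"{name}: {value:.2f}")
```

Using the exact $181.16 close, the stock sits about 12.5% below the high (12.6% with the rounded $181), and a `position_in_range` below 0.5 confirms it is in the lower half of the range.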

The current market cap prices in a significant deceleration from current growth rates. It is a bet that the AI infrastructure supercycle, which has driven NVDA from a $2.2 trillion valuation in 2022 to over $4.5 trillion at peak, is approaching a sharp downturn. That bet may ultimately prove correct on a multi-year basis, but it is being made at a point in the cycle where the near-term catalyst picture is as dense as it has been in three years: GTC 2026 is approaching, the Rubin architecture is beginning its production ramp, the $20 billion Groq LPU deal is poised for its first product announcement, and sovereign AI spending tripled to $30 billion in fiscal 2026. The Wall Street consensus disagrees with the market's pessimism: Strong Buy at 4.71 out of 5, the highest conviction rating for any major semiconductor name.

Q4 Fiscal 2026 Numbers That Should Have Sent the Stock Higher — And the One Answer That Didn't

Nvidia's (NASDAQ: NVDA) Q4 fiscal 2026 earnings were, by any objective measure, one of the most impressive quarters a semiconductor company has ever reported. Total revenue hit $68 billion — a figure that includes $62 billion from data centers alone. Revenue growth was 73% year-over-year. EPS growth was 98%. Gross margins returned to 75% — the highest level since calendar Q1 2024, beating fears about TSMC price increases and margin compression. Operating margins reached an all-time high of 65%, reflecting a degree of operating leverage that no hardware company in history has generated at this scale. Guidance for Q1 fiscal 2027 was $78 billion in revenue at sustained 75% gross margins and continued record operating margins — guidance that implies 77% year-over-year growth and absolutely obliterates the consensus expectation.

The stock fell anyway. NVDA lost approximately 9% in the sessions following earnings and has drifted further since. The conventional explanation — that investors are concerned about hyperscaler ASICs cannibalizing Nvidia's GPU business — is wrong. The actual Q4 data on customer concentration refutes the ASIC narrative directly: the two largest customers in Q4 accounted for 36% of data center revenue combined, and no other single customer exceeded 10%. CEO Jensen Huang confirmed that approximately 50% of data center revenues are now coming from non-hyperscaler customers — enterprises, model developers, sovereign AI programs, and neoclouds. At 50% non-hyperscaler revenue, the ASIC risk from Google, Amazon, and Microsoft custom silicon is a real but bounded risk to the hyperscaler half of the business, not an existential threat to the full enterprise.

The actual reason the stock fell is more subtle and more important than the ASIC narrative. During the Q4 earnings call, an analyst asked Jensen Huang directly about hyperscaler capital spending growth — specifically whether customers whose capex is already at 100% of operating cash flow can continue to grow their AI hardware purchases in 2027 and beyond. Huang's answer — "compute is revenues, without tokens there's no revenue growth, I am confident in their cash flow growing" — inadvertently confirmed the core concern rather than neutralizing it. By tying future hyperscaler capex growth to their operating cash flow growth, Huang essentially conceded that the era of hyperscaler capex escalating above operating cash flow growth (by increasing capital intensity toward 100%) is ending. Amazon, Alphabet, Meta, and potentially Microsoft are already at or approaching 100% capex-to-operating-cash-flow ratios in 2026, with $650 billion to $700 billion in combined AI capital expenditure. Future growth from this cohort is bounded by their revenue growth, not by their willingness to increase capital intensity further. That is the real message that spooked the market — not ASICs, but the ceiling on hyperscaler spending momentum.
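The ceiling argument can be made concrete with a minimal sketch. It assumes capex is modeled as capital intensity (capex divided by operating cash flow) times operating cash flow; the 15% cash flow growth and the 60% starting intensity are hypothetical inputs chosen only to illustrate the mechanism, not figures from the earnings call.

```python
def capex_growth(ocf_growth: float, intensity_now: float, intensity_next: float) -> float:
    """Year-over-year capex growth when capex = intensity * operating cash flow."""
    return (1 + ocf_growth) * (intensity_next / intensity_now) - 1


# Hypothetical: operating cash flow grows 15% in both scenarios.
# Scenario A: intensity is still rising from 60% toward 100% of cash flow.
rising = capex_growth(ocf_growth=0.15, intensity_now=0.60, intensity_next=1.00)
# Scenario B: intensity is already capped at 100% of cash flow.
capped = capex_growth(ocf_growth=0.15, intensity_now=1.00, intensity_next=1.00)

print(f"capex growth while intensity rises: {rising:.1%}")
print(f"capex growth once intensity is capped: {capped:.1%}")
```

Once intensity stops rising, the ratio term equals 1 and capex growth collapses to the cash flow growth rate, which is the bound the paragraph describes.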

The $20 Billion Groq Deal and the LPU That Jensen Huang Said Will "Surprise the World"

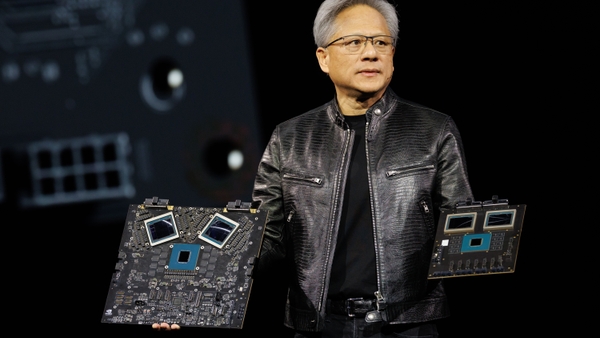

On February 19, 2026, Jensen Huang told the Korea Economic Daily that Nvidia would unveil "a chip that will surprise the world" at GTC 2026, which takes place next week. Combined with what Huang said on the Q4 earnings call ("what we'll do with Groq is you'll come to see GTC, but what we'll do is we'll extend our architecture with Groq as an accelerator in very much the way that we extended NVIDIA's architecture with Mellanox"), that statement provides the clearest available directional signal about what GTC 2026's headline announcement will be.

Nvidia (NASDAQ: NVDA) concluded a $20 billion deal with Groq in December 2025. Groq was founded in 2016 by ex-Google engineers including Jonathan Ross — one of the designers of Google's first AI chip — and developed the Language Processing Unit, or LPU: a chip architecture purpose-built for inference workloads, specifically the sequential, multi-step reasoning loops that define agentic AI. The deal structure: a non-exclusive licensing of Groq's entire inference technology IP, combined with an acqui-hire of 90% of Groq's talent including founder Jonathan Ross and president Sunny Madra. At $20 billion — approximately 0.5% of Nvidia's market cap at the time of acquisition — this was an extraordinarily cheap insurance policy against the single greatest risk to Nvidia's long-term data center moat: the secular shift from AI training (where GPUs dominate) to AI inference (where GPUs are structurally less efficient).

The architectural difference between an LPU and a GPU matters enormously for agentic AI workloads. A GPU — Nvidia's traditional product — uses external high-bandwidth memory (HBM) attached via CoWoS packaging. When processing sequential inference tasks, the GPU's compute cores sit idle 60% to 70% of the time waiting for data to arrive from external HBM. The Groq LPU solves this with massive on-chip SRAM and VLIW architecture — keeping all essential data locally accessible and eliminating the memory-fetching latency that makes GPUs inefficient for agentic workloads. The result: 5x to 10x more efficient token generation per second for sequential, low-batch agentic inference tasks compared to current GPU architectures. For applications where an AI agent is making hundreds of sequential reasoning passes per user request — planning, executing, evaluating, iterating — the LPU's efficiency advantage over the GPU is not incremental. It is structural.

Four independent supply chain signals confirm that the GTC mystery chip is an LPU rather than a conventional GPU: Nvidia's GPU release schedule follows a 2-year architecture cycle (Ampere 2020, Hopper 2022, Blackwell 2024, Rubin 2026) with no room for a surprise GPU insertion; TSMC CoWoS capacity warnings are stable and attributable to known Blackwell Ultra and Rubin ramps rather than any mystery new product; HBM4 supply is fully booked for 2026 with no reallocation spikes that would suggest a new GPU pulling from the Rubin allocation; and there are no reports of mystery wafer bookings at TSMC's advanced 3nm or 2nm nodes that would be required for a new flagship GPU. Groq's LPUs have historically used older nodes — the v1 LPU used GLOBALFOUNDRIES 14nm and the v2 used Samsung's SF4x 4nm — and an LPU announcement would require neither CoWoS capacity nor HBM4 allocation nor bleeding-edge node capacity. Every absence of a signal that should exist if this were a GPU is instead a positive signal for the LPU thesis.

Agentic AI: $100 to $150 Billion Inference Market Today, 10x Potential Ahead

The strategic logic behind the Groq acquisition and the LPU announcement becomes clear when mapped against where AI compute demand is heading. Jensen Huang has repeatedly called the current moment the "agentic AI inflection": the transition from generative AI, which produces a single response per query, to agentic AI, which triggers 10 to 100 inference passes per task as an AI agent plans, executes, evaluates, and iterates toward a goal. This shift from single-pass to multi-pass inference is not a marginal increase in compute demand. It is a 10x to 100x multiplier on the compute required per task.

The total AI market today is estimated at approximately $525 billion. Of that, the inference compute segment represents approximately $100 to $150 billion — the portion driven by serving AI model responses to end users and applications. Hyperscalers' combined $650 billion to $700 billion in AI capital expenditure for 2026 has been built with inference demand in mind: the Futurum Group estimates approximately 75% of that spend — $450 billion to $500 billion — is explicitly linked to preparing for inference-heavy and agentic workloads. Goldman Sachs corroborates this, noting that consensus capex estimates have risen to over $500 billion with the majority focused on scaling reasoning-led inference infrastructure.

The commercial agentic AI market — tools, platforms, software, and applications — is currently estimated at $5 billion to $8 billion in 2026 and is projected to grow at 40% CAGR, reaching $96 billion to $139 billion by 2034 depending on the estimate source. But the larger opportunity is the inference compute infrastructure market itself, which has the potential to reach 10x its current $100 to $150 billion size as agentic AI scales. Every dollar of cost reduction in per-token economics — which the Rubin platform delivers at 10x lower cost per token versus Blackwell, and which the LPU architecture extends even further — creates an opex flywheel: lower cost per token enables more token generation at the same budget, which creates more AI outputs, which generates more hyperscaler revenue, which funds more hardware purchases. The inference opex flywheel is the mechanism that converts agentic AI hype into sustained recurring demand for Nvidia's data center infrastructure.
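The growth projection for the commercial agentic AI market is a compounding calculation, sketched below. The 8-year horizon (2026 to 2034) is an assumption read from the dates in the paragraph, and the helper names are illustrative.

```python
def compound(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` per year for `years` years."""
    return start * (1 + rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that takes `start` to `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


YEARS = 2034 - 2026  # assumed horizon

# Market size in billions of dollars, compounded at the quoted 40% CAGR.
low_2034 = compound(5.0, 0.40, YEARS)
high_2034 = compound(8.0, 0.40, YEARS)
print(f"40% CAGR: ${low_2034:.0f}B to ${high_2034:.0f}B by 2034")

# Growth rates implied by the quoted 2034 endpoints.
print(f"$5B -> $96B implies {implied_cagr(5.0, 96.0, YEARS):.1%} per year")
print(f"$8B -> $139B implies {implied_cagr(8.0, 139.0, YEARS):.1%} per year")
```

Under these assumptions, a flat 40% rate yields roughly $74 billion to $118 billion, so the quoted $96 billion to $139 billion endpoints correspond to growth a few points above 40%, which is consistent with the figures coming from different estimate sources.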

Q4 Revenue Composition: Two-Thirds of Data Center Revenue Is Already Full-Stack Grace Blackwell Systems

Nvidia's (NASDAQ: NVDA) Q4 fiscal 2026 data center revenue of $62 billion — within the $68 billion total — is not primarily individual GPU chip sales anymore. Two-thirds of that $62 billion came from Grace Blackwell full-stack systems — complete AI computing infrastructure including GPUs, CPUs, networking interconnects, and software. That is the most important data point in the entire quarter for understanding where Nvidia's competitive moat is actually located.

When customers buy Grace Blackwell systems rather than individual GPUs, they are buying into Nvidia's topology, architecture, networking stack, and software ecosystem simultaneously. They are buying NVLink, Spectrum-X, and InfiniBand alongside the GPU — and the networking revenue reflects this: $11 billion in networking revenue in the quarter, proving that NVLink, Spectrum-X, and InfiniBand have become as strategically important as the GPU itself in large AI cluster deployments. A competitor that builds a faster GPU than Nvidia does not automatically displace the Grace Blackwell system, because the GPU is only one component of a system that the customer has optimized their entire data center architecture around. This is the Mellanox playbook replayed at a $62 billion quarterly revenue scale — and it is why the ASIC threat, while real, is more bounded than bears claim.

The demand composition is also broadening in ways that reduce the hyperscaler ceiling risk. Sovereign AI spending tripled in Nvidia's fiscal 2026 to over $30 billion — governments treating AI infrastructure as they treat electricity and telecommunications, building national AI capacity with Nvidia systems. Enterprise and model developer demand is growing alongside hyperscaler spending, not as a replacement for it but as a parallel revenue stream. The 50% non-hyperscaler revenue in Q4 is not a small footnote — it means that even if hyperscaler capex growth decelerates to match operating cash flow growth, the non-hyperscaler half of the data center business can grow faster and offset the slowdown.

Rubin Architecture, CoWoS Packaging, and the Production Ramp That Avoids the Transition Lull

Nvidia (NASDAQ: NVDA) has already delivered samples of its Vera Rubin architecture and expects production to begin in the second half of 2026. The significance of the Rubin timing relative to the Blackwell deployment cycle cannot be overstated. Most semiconductor companies experience a revenue lull during architecture transitions as customers pause purchases to wait for the new generation. Nvidia is structuring Rubin's production ramp to overlap with Blackwell's continued deployment — avoiding the transition lull by ensuring both architectures are generating revenue simultaneously during the transition period.

Rubin's critical performance differentiation: 10x lower cost per token compared to Blackwell, driven by HBM4 integration and architectural improvements in tokens-per-watt efficiency. Jensen Huang made the unit economics explicit on the earnings call: "The single most important lever of our gross margins is actually delivering generational leads to our customers... If we could deliver generationally performance per watt that exceeds dramatically what Moore's Law can do... then we can continue to sustain our gross margins." That statement is a commitment that 75% gross margins are defensible through Rubin and beyond — not as a static achievement but as a dynamic outcome of continuously delivering performance improvements that justify the pricing premium.

Power constraints are replacing chip availability as the primary bottleneck in AI infrastructure scaling. Data centers have evolved from being processor-constrained to being power-constrained — the limiting variable is no longer the availability of GPUs but the availability of electricity to run them. The company that delivers the highest performance per watt becomes the preferred vendor in a power-constrained environment regardless of absolute compute performance. Nvidia's roadmap commitment to generational performance-per-watt improvements is specifically designed to maintain its preferred-vendor status in a world where power is the scarce resource. SK Hynix has announced dedicated HBM4 facilities tied to Rubin timelines, Samsung has received HBM4 certification for Rubin integration, and Micron confirmed HBM4 capacity for 2026 is sold out with initial shipments already commencing a quarter ahead of schedule — confirming that the supply chain is fully aligned behind Rubin's production ramp.

ByteDance, Samsung NAND, and the China Wildcard That Nvidia Has Already Excluded From Guidance

Nvidia (NASDAQ: NVDA) has assumed zero data center compute revenue from China in its forward guidance — a conservative assumption that both protects the company from regulatory surprises and makes every Chinese revenue dollar an unmodeled upside option. The ByteDance development reported this week — ByteDance gaining access to Nvidia's top AI chips despite China export restrictions through procurement in Malaysia — is a reminder that demand for Nvidia chips in China has not disappeared; it has been redirected through alternative channels that neither Nvidia nor the US export control framework can fully prevent.

The Nvidia-Samsung partnership to develop next-generation NAND memory chips represents a separate strategic initiative: diversifying memory supplier relationships and developing memory architectures optimized for AI inference workloads where NAND's density and bandwidth characteristics differ from HBM's. Samsung's involvement in both NAND development and HBM4 supply for Rubin positions the Korean memory giant as a more comprehensive Nvidia partner than at any previous point in the relationship — and for Nvidia, diversifying HBM supply beyond SK Hynix while developing differentiated memory solutions for inference workloads is strategically essential as inference's memory access patterns diverge from training's.

The geopolitical risk from the Iran war and its economic consequences has created a macro environment that is broadly negative for technology stocks — NVDA down 1% on Friday is consistent with the broader Nasdaq (COMP) under pressure as oil at $94 per barrel and core PCE at 3.1% eliminate near-term Fed cut expectations. But Nvidia's data center business is fundamentally insulated from oil price shocks in a way that consumer-facing technology businesses are not. Hyperscalers and enterprises do not cancel AI infrastructure orders because gasoline costs more. They may slow the pace of escalation — which is exactly what the hyperscaler cash flow ceiling story implies — but the underlying demand for AI compute infrastructure is driven by competitive necessity, not discretionary budget, and competitive necessity does not respond to oil prices.

Valuation at 22x Forward Earnings: The Cheapest NVDA Has Ever Traded on This Metric

Nvidia (NASDAQ: NVDA) at $181 trades at 22 times forward earnings — the lowest forward P/E multiple in the stock's modern history as an AI company. To contextualize that number: NVDA was trading at 30x to 40x forward earnings for most of 2024 and early 2025, at a time when the data center revenue trajectory was less certain than it is today. The market is now pricing 22x forward earnings for a company guiding $78 billion in Q1 fiscal 2027 revenue at 77% year-over-year growth with 75% gross margins and 65% operating margins. That is not how rational markets price dominant secular growth franchises.

The DCF-based price targets from the most detailed analyses of NVDA's financial trajectory converge on a range that implies substantial upside from current levels. A base case using a 5-year revenue CAGR of 26% through FY2031 with continued operating leverage produces a $197 per share target, roughly 9% above Friday's close. An upside scenario incorporating the LPU/agentic AI inflection, with a 28% 5-year revenue CAGR and a 30% earnings CAGR, produces a $208 per share target, roughly 15% above Friday's close. Both scenarios use a 9.9% WACC and a 1.5% perpetual growth rate applied to FY2031 EBITDA for the terminal value.
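For readers who want to see how the WACC and perpetual growth inputs interact, here is a minimal DCF sketch. It is not the model behind the $197 and $208 targets: it swaps in a Gordon-growth terminal value on the final-year cash flow rather than on EBITDA, and the per-share cash flows are hypothetical placeholders. Only the 9.9% WACC and 1.5% growth rate come from the text.

```python
def dcf_value(cash_flows: list[float], wacc: float, terminal_growth: float) -> float:
    """Present value of explicit cash flows plus a Gordon-growth terminal value."""
    if wacc <= terminal_growth:
        raise ValueError("wacc must exceed terminal_growth")
    # Discount each explicit-year cash flow back to today (year 1, 2, ...).
    pv_explicit = sum(cf / (1 + wacc) ** t for t, cf in enumerate(cash_flows, start=1))
    # Terminal value as of the end of the final explicit year.
    terminal = cash_flows[-1] * (1 + terminal_growth) / (wacc - terminal_growth)
    # Discount the terminal value back from the final explicit year.
    pv_terminal = terminal / (1 + wacc) ** len(cash_flows)
    return pv_explicit + pv_terminal


# Hypothetical per-share cash flows for FY2027 through FY2031 (NOT from the article).
hypothetical_fcf_per_share = [6.0, 7.5, 9.0, 10.5, 12.0]
value = dcf_value(hypothetical_fcf_per_share, wacc=0.099, terminal_growth=0.015)
print(f"Illustrative value per share: ${value:.2f}")
```

With a 9.9% WACC and 1.5% perpetual growth, the terminal value works out to about 12.1 times the final-year cash flow (1.015 / 0.084), so the result is highly sensitive to the FY2031 assumption.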

The most aggressive institutional target sits at $245 per share, derived by applying a 23x multiple on 2027 estimates — a target that implies 35% upside from the current $181 price. That target is based on the judgment that Nvidia will grow revenues above 20% annually for the rest of this decade — a threshold that seems conservative given the company is currently guiding 77% growth and the forward consensus still implies 20%+ growth well into the late 2020s even after accounting for the hyperscaler capex ceiling thesis.
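The upside percentages and the earnings implied by the quoted multiples follow from simple ratios, sketched below. The helper names are illustrative, and treating "forward earnings" and "2027 estimates" as comparable per-share figures is an assumption, since the article does not define either period precisely.

```python
def upside_pct(target: float, price: float) -> float:
    """Percentage gain from `price` to `target`."""
    return (target / price - 1) * 100


def implied_eps(price: float, multiple: float) -> float:
    """Earnings per share implied by a price and a P/E multiple."""
    return price / multiple


PRICE = 181.16  # Friday's close, from the article

for label, target in [("base case", 197.0), ("upside case", 208.0), ("12-month", 245.0)]:
    print(f"{label}: ${target:.0f} is {upside_pct(target, PRICE):.1f}% above ${PRICE}")

# EPS implied by the 23x multiple behind the $245 target.
print(f"implied 2027 EPS at 23x: ${implied_eps(245.0, 23.0):.2f}")
# EPS implied by the current price at 22x forward earnings.
print(f"implied forward EPS at 22x: ${implied_eps(PRICE, 22.0):.2f}")
```

The $245 target checks out at roughly 35% upside, and it implies 2027 earnings of about $10.65 per share against about $8.23 implied by today's 22x multiple.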

The analyst rating consensus reflects this conviction: Wall Street carries a Strong Buy at 4.71 out of 5, the highest score in the semiconductor sector. SA Analysts maintain a Buy at 4.00. Only the Quant framework dissents with a Hold at 3.48; it is a systematic model that processes the near-term earnings uncertainty and macro headwinds without weighting the GTC catalyst, the LPU optionality, or the structural inference demand inflection. Insider transaction records are worth watching as well: executive buying at current levels would be the strongest possible confirmation of the bullish thesis.

Nvidia (NASDAQ: NVDA) Verdict: STRONG BUY — Target $208 Near-Term, $245 on 12-Month View, GTC as the Catalyst

Nvidia (NASDAQ: NVDA) at $181 is a STRONG BUY. The post-earnings selloff of approximately 9% has created an entry point that the fundamental data does not justify. The company delivered 73% revenue growth, 98% EPS growth, 75% gross margins, and 65% operating margins while guiding 77% growth for the next quarter — and the stock trades at 22x forward earnings, its cheapest historical multiple. The hyperscaler capex ceiling concern is valid as a 2027-and-beyond growth moderator but does not change the near-term trajectory of a company with $78 billion in Q1 revenue guidance and a product roadmap — Rubin in H2 2026, LPU at GTC — that addresses every bear concern simultaneously.

GTC 2026 next week is the most consequential near-term catalyst for NVDA since the launch of the H100. If Jensen Huang unveils a Groq-derived LPU at the keynote — which the supply chain signals, the earnings call language, and the Korea Economic Daily tease all point toward — it resolves the three concerns that drove the post-earnings selloff: it provides the fresh narrative (agentic AI infrastructure), it extends the growth runway (the $100 to $150 billion inference market with 10x expansion potential), and it strengthens the moat (purpose-built inference architecture that hyperscaler ASICs are not designed to replicate). An LPU announcement is the difference between the $197 base case target and the $208 upside target — with the $245 12-month target reflecting sustained execution through the Rubin transition. Use the current macro-driven weakness as the entry point. The next move in NVDA is higher.